Géné-Pi

Mathematics of generative models

Overview

Claire Boyer, Professor, Université Paris-Saclay

The Géné-Pi project aims to develop a unified theoretical framework to better understand and improve Transformer-type deep learning architectures and diffusion models. The goal is to increase their reliability, efficiency, and applicability in various contexts, particularly in self-supervised learning, data generation, and physics-constrained modeling.

Keywords: Transformer-based models, attention layers, diffusion-based generative models

Missions

Our research

Understanding the role of optimization and its statistical impacts on deep models

Analyze how optimization trajectories (gradient descent, hyperparameter selection) induce implicit biases that influence the generalization and robustness of models.

Combine statistical theory and optimization tools to jointly study optimization errors and statistical errors on simplified but representative models.
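
As a toy illustration of implicit bias (a textbook example, not taken from the project itself), gradient descent started at zero on an underdetermined least-squares problem converges to the minimum-norm solution, even though infinitely many solutions fit the data exactly. The sketch below assumes a single linear equation with two unknowns; the function name, data, step size, and iteration count are all hypothetical choices.

```python
def gradient_descent_least_squares(a, y, lr=0.05, steps=2000):
    """Minimize 0.5 * (a . w - y)^2 by gradient descent, starting from w = 0."""
    w = [0.0] * len(a)
    for _ in range(steps):
        # Residual of the single linear equation a . w = y.
        residual = sum(ai * wi for ai, wi in zip(a, w)) - y
        # The gradient is residual * a, so iterates never leave span(a).
        w = [wi - lr * residual * ai for wi, ai in zip(w, a)]
    return w


# One equation, two unknowns: every w with w[0] + 2 * w[1] = 5 fits exactly.
a = [1.0, 2.0]
y = 5.0

w_gd = gradient_descent_least_squares(a, y)

# Closed-form minimum-norm interpolating solution: a * y / ||a||^2.
norm_sq = sum(ai * ai for ai in a)
w_min_norm = [ai * y / norm_sq for ai in a]

print("gradient descent:", w_gd)
print("minimum norm:    ", w_min_norm)
```

Other exact solutions such as (5, 0) or (3, 1) are never reached: the choice of algorithm and initialization, not the loss alone, selects which interpolating solution is returned.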

Elucidating the internal mechanisms of Transformers

Understand how Transformers learn to extract and structure information, and identify situations where attention mechanisms fail (head entanglement).

Study controlled statistical tasks (multi-location regression, clustering, self-supervision) and analyze local minima and learning dynamics in order to propose algorithmic and architectural corrections.

Linking Transformers and classical statistical dimension reduction methods

Show how certain Transformer architectures learn representations similar to those of classical methods (PCA, PLS), while offering greater flexibility.

Adopt a continuous view of attention layers as operators acting on distributions, and analyze how they are learned by gradient descent in Gaussian and semi-Gaussian frameworks.
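
One common way to formalize this continuous view (a sketch in notation assumed here, not necessarily the one used by the project) is to replace the finite set of tokens by a probability measure $\mu$ on $\mathbb{R}^d$. With hypothetical query, key, and value matrices $Q$, $K$, $V$, a single attention head then acts on a point $x$ as

$$
\Gamma_\mu(x) = \frac{\int \exp\big(\langle Qx, Ky\rangle\big)\, Vy \,\mathrm{d}\mu(y)}{\int \exp\big(\langle Qx, Ky\rangle\big)\,\mathrm{d}\mu(y)} .
$$

When $\mu$ is the empirical measure $\frac{1}{n}\sum_{i=1}^{n}\delta_{x_i}$ of $n$ tokens, this reduces to standard softmax attention (up to the usual $1/\sqrt{d}$ scaling, which can be absorbed into $Q$ or $K$). The layer as a whole maps $\mu$ to the pushforward measure $(\Gamma_\mu)_{\#}\mu$, which makes it an operator on distributions rather than on sequences of fixed length.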

Deconstructing the “black box” of score matching in diffusion models

Explain why and how diffusion models effectively learn complex probability distributions without excessively memorizing data.

Study the role of implicit regularization induced by optimization, and analyze scaling laws and their link to the stability and generalization of the learned score.
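
For reference, the standard denoising score matching objective can be written as follows (a sketch in assumed notation; the project may work with a different parameterization). Given a data point $x_0$, a noise level $t$, and Gaussian noise $\varepsilon \sim \mathcal{N}(0, I)$, set $x_t = \alpha_t x_0 + \sigma_t \varepsilon$. The conditional score is $\nabla_{x_t} \log p(x_t \mid x_0) = -\varepsilon / \sigma_t$, and the network $s_\theta$ is trained by minimizing

$$
\mathcal{L}(\theta) = \mathbb{E}_{t,\, x_0,\, \varepsilon}\Big[ \lambda(t)\, \big\| s_\theta(x_t, t) + \varepsilon / \sigma_t \big\|^2 \Big],
$$

where $\lambda(t) > 0$ is a weighting function. At the population level the minimizer is the marginal score $\nabla_x \log p_t(x)$. With a finite training set, however, the exact minimizer of the empirical loss is the score of a Gaussian mixture centered on the rescaled training points, which would reproduce the training data at sampling time. The gap between this memorizing solution and what trained networks actually learn is where implicit regularization enters the picture.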

Improving sampling and integrating physical constraints into generative models

Make diffusion models more efficient, interpretable, and suitable for non-Euclidean, discrete, or physically governed data.

Explore alternatives to isotropic Gaussian noise, develop diffusions compatible with discrete structures, and integrate constraints from PDEs via kernel-based theoretical frameworks.

Consortium

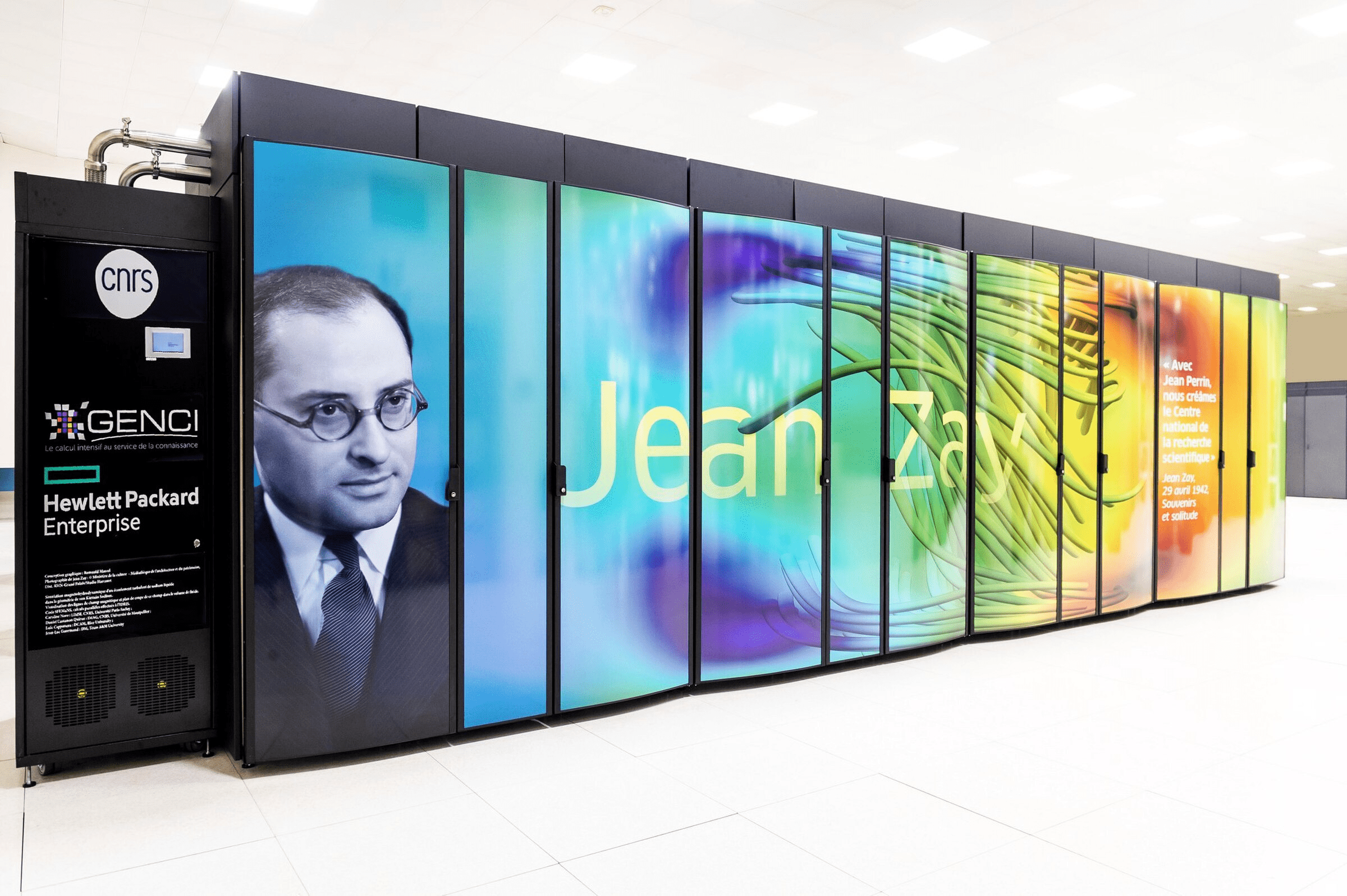

Université Paris-Saclay, Inria, Sorbonne Université

- Contributions disseminated through journal articles and major conferences in the field

- Training and recruitment of young scientists

- Organization of one or two international conferences on the subject

- Advancing fundamental understanding of AI models and consolidating France’s position as a leader in this field

- A better fundamental understanding of AI models, in order to make them more explainable and reliable

- Training for young scientists, particularly doctoral students and master’s students

- The development of specialized teaching on these topics at the master’s/doctoral level

A community of 20 researchers, faculty members, and permanent engineers, along with 3 doctoral students, 2 postdoctoral researchers, and around ten collaborators over the course of the project.

Publication
