MacLeOD

Machine learning on geometries and distributions

Preview

Rémi Flamary, professor, Ecole Polytechnique

Laetitia Chapel, professor, Institut Agro Rennes-Angers

The MacLeOD project aims to unify geometric and distribution-based deep learning by leveraging optimal transport to compare and transform complex data distributions on geometric spaces, developing efficient algorithms and novel architectures.

Keywords: Distributions, Geometric Deep Learning, Optimal Transport, Manifold, Graphs, Transformer-based models, attention layers, diffusion-based generative models

Missions

Our research

Modeling complex data distributions with novel Optimal Transport problems.

Existing optimal transport (OT) formulations are not adapted to the needs of modern ML models. New OT formulations are needed that can adapt to the geometric and distributional aspects of the data in modern (geometric) deep learning.

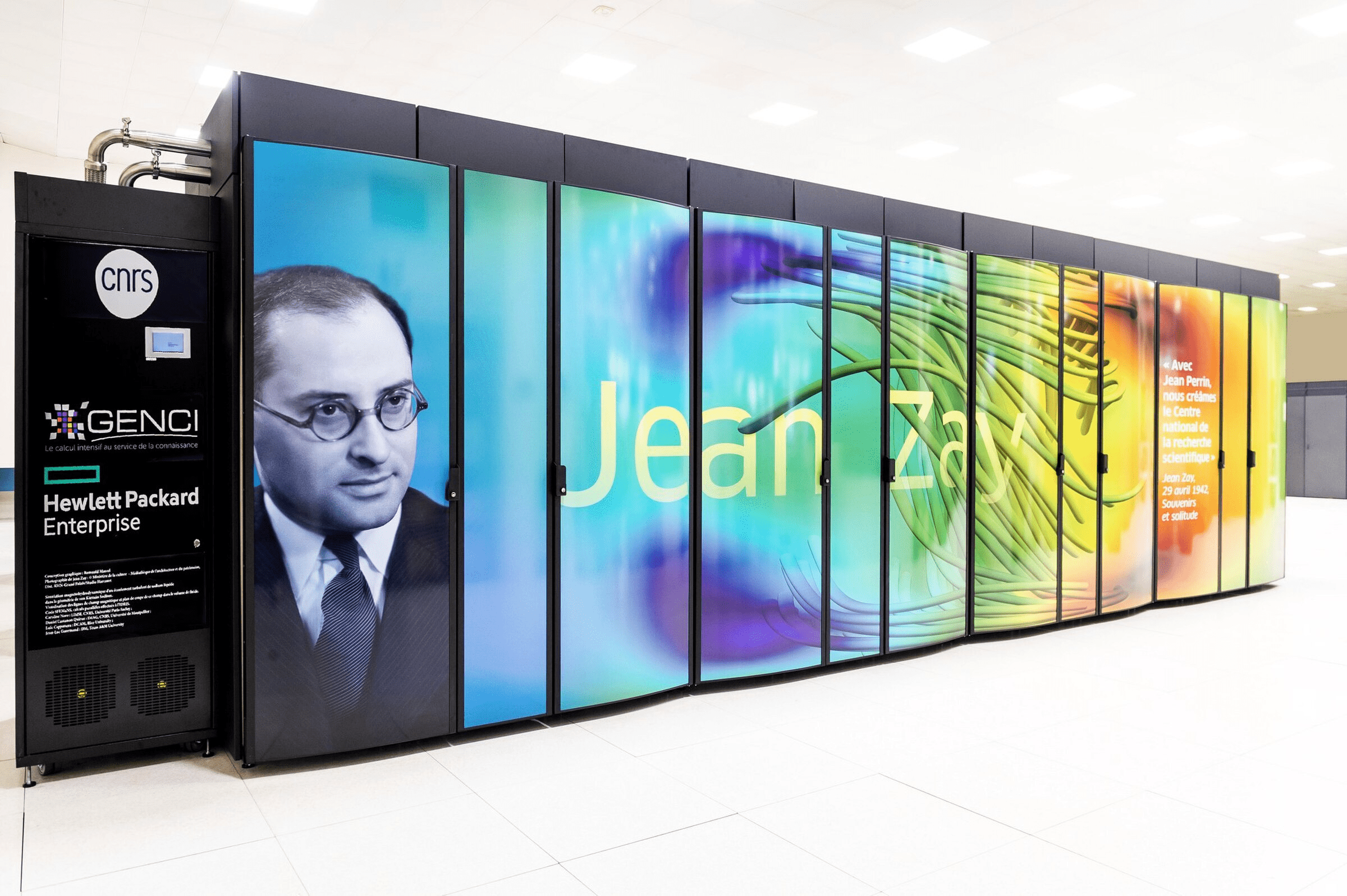

Investigating efficient algorithms for processing large scale distributions on manifolds.

The use of OT in ML, and in particular in deep learning layers, is often limited by the computational cost of solving the OT problem. We need to develop new algorithms, such as random projection and slicing methods, that can scale to the size of modern datasets.
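As an illustration of the slicing idea, here is a minimal sketch of a Monte Carlo sliced Wasserstein estimate in NumPy. It is not the project's own implementation: the function name `sliced_wasserstein` and its parameters are hypothetical, and it assumes two point clouds with the same number of samples and uniform weights. Each random direction reduces the problem to one dimension, where OT is solved by sorting, so the cost is roughly O(L n log n) for L projections, compared with the roughly cubic cost of exact OT solvers.

```python
import numpy as np


def sliced_wasserstein(x, y, n_projections=100, p=2, seed=0):
    """Monte Carlo estimate of the sliced p-Wasserstein distance.

    Assumes x and y are arrays of shape (n_samples, dim) with the same
    number of samples and uniform weights (an illustrative simplification).
    """
    if x.shape != y.shape:
        raise ValueError("this sketch expects x and y to have the same shape")
    rng = np.random.default_rng(seed)
    dim = x.shape[1]

    # Draw random directions uniformly on the unit sphere.
    theta = rng.normal(size=(n_projections, dim))
    theta /= np.linalg.norm(theta, axis=1, keepdims=True)

    # Project both point clouds: result has shape (n_samples, n_projections).
    x_proj = x @ theta.T
    y_proj = y @ theta.T

    # In 1D, the optimal coupling between equal-size uniform samples
    # matches the sorted values of one cloud to those of the other.
    x_sorted = np.sort(x_proj, axis=0)
    y_sorted = np.sort(y_proj, axis=0)

    # Average the p-th power cost over samples and projections.
    return np.mean(np.abs(x_sorted - y_sorted) ** p) ** (1.0 / p)


if __name__ == "__main__":
    rng = np.random.default_rng(42)
    source = rng.normal(size=(500, 3))
    target = source + np.array([1.0, 0.0, 0.0])  # shifted copy
    print(sliced_wasserstein(source, target))
```

The estimate is zero for identical point clouds and, for a pure translation, never exceeds the norm of the shift, since each projection can only shorten it.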

Designing Deep Learning architectures encoding distributions on manifolds.

Deep learning models, especially those processing distributions, are often restricted to data living in a Euclidean space. There is thus a need for new frameworks that can efficiently process data on manifolds, from Riemannian manifolds to more general metric spaces.

Developing the field of Geometric Distributional Deep Learning for graph modeling.

Recent work has shown the power of combining geometric deep learning (GDL) with distributional representations for graph processing, but a general framework for modeling constraints or dynamics on graphs through evolving distributions is still lacking.

Using model and data distributions to analyze and interpret deep neural networks.

Deep neural networks are notoriously hard to analyze. Devising new OT-based methods to study the distributions and distributional operators along the layers will lead to a better understanding of these models.

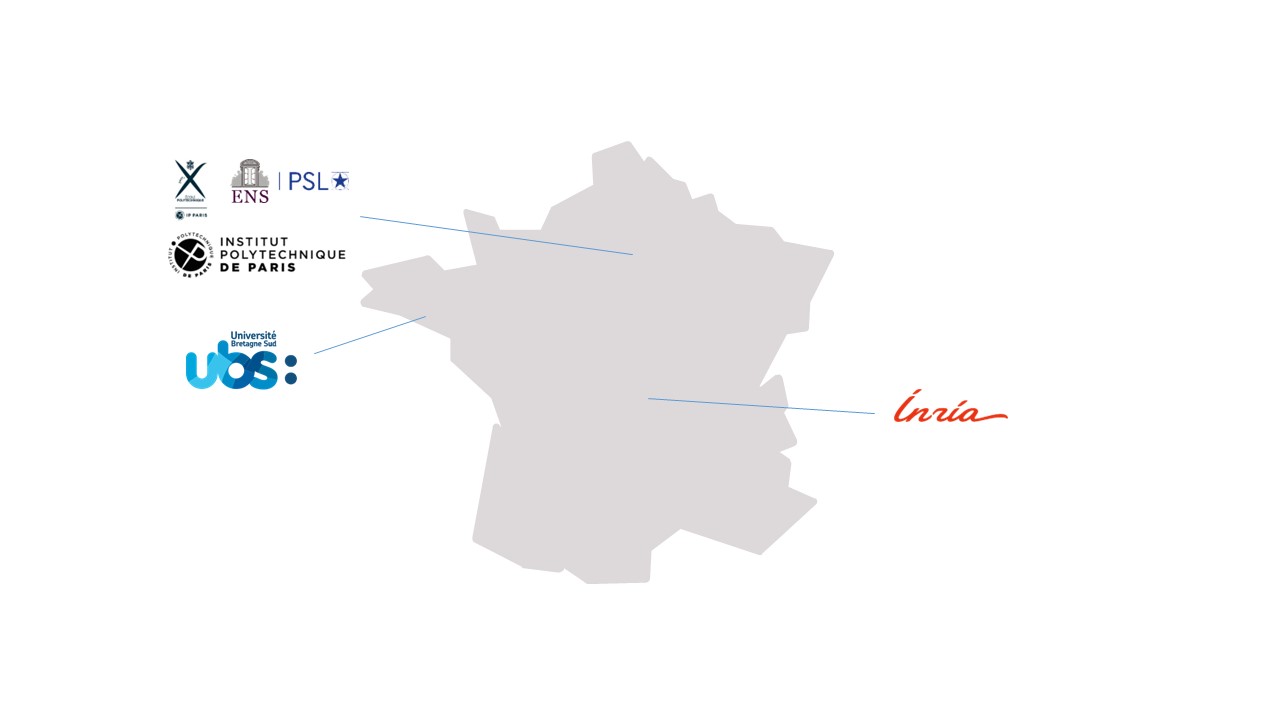

Consortium

Ecole/Institut Polytechnique de Paris, Université de Bretagne Sud, Ecole Normale Supérieure, Inria

The MacLeOD project will develop new frameworks, methods, and algorithms for deep learning on complex data, with potential impact across a wide range of applications. More precisely, it will:

- Develop new methodologies for optimal transport for machine learning on complex geometries and objects

- Lay the foundations of distributional deep learning for graphs

- Design dedicated deep learning methods for distributions lying on manifolds

- Address the complexity bottleneck of optimal transport by scaling it with slicing and random projections

The project will develop open-source software to make our methods available to the community and foster reproducible research.

By developing mathematically grounded methods and efficient algorithms, the project will enable a more faithful and robust analysis of complex data, thereby improving the quality of learning systems. To ensure that the results have a positive societal impact, the project will promote the Ethics by Design approach, minimizing the potential misuse of our methods.

Over the course of the project, its scientific community will comprise 2 researchers, 11 university staff, 6 PhD students, 1 research engineer and 2 postdoctoral researchers.

Publication
