MadLearning

Deep Learning Mathematics: From Theory to Applications

Preview

François Malgouyres, professor, Institut de Mathématiques de Toulouse and Université de Toulouse

The MadLearning project explores the geometry of neural networks and its impact on the optimization landscape and on the regularization of learned functions. It analyzes how the properties of the objective function influence the trajectories of stochastic algorithms and of the straight-through estimator. The theoretical results are compared with practice and inform the design of high-performance, efficient State-Space Models (SSMs), particularly for applications in computer vision and time series modeling.

Keywords: Neural network geometry, Optimization landscape, Implicit regularization, Stochastic gradient algorithm, Straight-through estimator, State-Space Models, Quantized neural networks

Missions

Our research

Geometric study of neural networks

Study the local dimension of the image of a sample under a neural network, as the network parameters vary. This local dimension makes it possible to characterize both the regularity of the learned function and the geometry of the objective function.

Analyze this local dimension for different architectures, such as State-Space Models (SSMs), Transformers, and ResNet.

Highlight the specific properties of neural networks with low local dimension.
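As an illustration of the notion of local dimension, the sketch below takes one possible reading of it, which is an assumption and not necessarily the project's definition: the rank of the Jacobian of the map sending the parameters to the network outputs on a fixed sample. The one-hidden-layer ReLU network, its sizes, and the function names `jacobian_wrt_params` and `local_dimension` are hypothetical choices made for the example.

```python
import numpy as np

def jacobian_wrt_params(W, b, v, X):
    """Jacobian of theta -> (f_theta(x_1), ..., f_theta(x_n)) for
    f_theta(x) = v . relu(W x + b), with theta = (W, b, v) flattened."""
    n = X.shape[0]
    pre = X @ W.T + b                               # (n, h) pre-activations
    active = (pre > 0).astype(float)                # (n, h) ReLU activation pattern
    dW = (active * v)[:, :, None] * X[:, None, :]   # (n, h, d) derivatives w.r.t. W
    db = active * v                                 # (n, h) derivatives w.r.t. b
    dv = np.maximum(pre, 0.0)                       # (n, h) derivatives w.r.t. v
    return np.concatenate([dW.reshape(n, -1), db, dv], axis=1)

def local_dimension(W, b, v, X):
    """Numerical rank of the Jacobian at the current parameters (assumed definition)."""
    return int(np.linalg.matrix_rank(jacobian_wrt_params(W, b, v, X)))

rng = np.random.default_rng(0)
d, h, n = 3, 4, 50                                  # input size, hidden width, sample size
W = rng.normal(size=(h, d))
b = rng.normal(size=h)
v = rng.normal(size=h)
X = rng.normal(size=(n, d))

n_params = W.size + b.size + v.size
rank = local_dimension(W, b, v, X)
print("local dimension:", rank, "number of parameters:", n_params)
```

For this toy network, rescaling the incoming weights of a hidden neuron by a positive factor and its outgoing weight by the inverse factor leaves the function unchanged, so the rank is at most the number of parameters minus the hidden width.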

Study of stochastic algorithms

Study the behavior of different stochastic algorithms on objective functions with various structures, in particular flat valleys whose bottoms consist of local minima.
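To make the setting concrete, here is a minimal sketch of a stochastic algorithm on an objective with a flat valley. Everything in it is an assumption chosen for illustration: the toy objective f(a, b) = 0.5 (ab - 1)^2, whose minimizers form the curve ab = 1, the additive Gaussian noise used as a crude stand-in for minibatch noise, and the function name `noisy_gradient_descent`.

```python
import numpy as np

def noisy_gradient_descent(theta0, lr=0.05, noise_std=0.1, steps=2000, seed=0):
    """Gradient descent with additive Gaussian gradient noise on
    f(a, b) = 0.5 * (a*b - 1)**2, whose minimizers form the curve a*b = 1."""
    rng = np.random.default_rng(seed)
    theta = np.array(theta0, dtype=float)
    path = [theta.copy()]
    for _ in range(steps):
        a, b = theta
        residual = a * b - 1.0
        grad = residual * np.array([b, a])       # exact gradient of f
        grad += noise_std * rng.normal(size=2)   # hypothetical noise model
        theta -= lr * grad
        path.append(theta.copy())
    return np.array(path)

path = noisy_gradient_descent([2.0, 0.2])
a_end, b_end = path[-1]
print("final residual a*b - 1:", a_end * b_end - 1.0)
print("final imbalance a**2 - b**2:", a_end**2 - b_end**2)
```

The iterates first fall to the bottom of the valley and then wander along it; tracking a quantity such as the imbalance a^2 - b^2 is one way to observe where along the valley the algorithm ends up.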

Study of the straight-through estimator

The straight-through estimator is the preferred algorithm for optimizing the weights of neural networks when they are constrained to quantized values. This approach is essential for designing efficient and/or embeddable models. However, its performance and behavior remain poorly understood.

Analyze this estimator under different assumptions concerning the properties of the objective function in order to shed light on its mechanisms.
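The following sketch shows the basic mechanism of the straight-through estimator on a toy problem. It is only an illustration under stated assumptions: a least-squares objective, weights binarized to {-1, +1}, and the identity variant of the estimator (the gradient with respect to the quantized weights is applied unchanged to the latent weights). The names `quantize` and `train_with_ste` are hypothetical.

```python
import numpy as np

def quantize(w):
    """Binarize latent weights to {-1, +1} (sign, with 0 mapped to +1)."""
    return np.where(w >= 0, 1.0, -1.0)

def train_with_ste(X, y, w0, lr=0.05, steps=200):
    """Least squares with binary weights, trained with an identity STE."""
    w = w0.copy()                                # latent full-precision weights
    losses = []
    for _ in range(steps):
        q = quantize(w)                          # forward pass uses quantized weights
        residual = X @ q - y
        losses.append(float(np.mean(residual ** 2)))
        grad_q = 2.0 * X.T @ residual / len(y)   # gradient w.r.t. the quantized weights
        # Straight-through step: the derivative of q w.r.t. w is zero almost
        # everywhere, so grad_q is used as a surrogate gradient for w.
        w -= lr * grad_q
    return w, losses

rng = np.random.default_rng(0)
n, d = 2000, 8
X = rng.normal(size=(n, d))
q_target = quantize(rng.normal(size=d))          # synthetic binary ground truth
y = X @ q_target

w, losses = train_with_ste(X, y, w0=0.5 * rng.normal(size=d))
print("initial loss:", losses[0], "final loss:", losses[-1])
```

The latent weights `w` are never used in the forward pass; only their quantized version is. This mismatch between the function being evaluated and the direction being followed is what makes the estimator difficult to analyze.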

Application to SSMs

State-Space Models (SSMs) are neural network architectures that can solve certain tasks more efficiently than competing architectures, while offering reduced algorithmic complexity.

Adapt this architecture to applications in computer vision and time series modeling and processing.
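For readers unfamiliar with SSMs, the sketch below shows the core of a discrete-time linear state-space layer, under the assumption of a single input and output channel and the recurrence h_t = A h_{t-1} + B u_t, y_t = C h_t. The matrices, sizes, and function names are hypothetical, and practical SSM architectures add nonlinearities, several channels, and specific parametrizations of A.

```python
import numpy as np

def ssm_recurrent(A, B, C, u):
    """Run h_t = A h_{t-1} + B u_t, y_t = C h_t, starting from a zero state."""
    h = np.zeros(A.shape[0])
    y = np.empty(len(u))
    for t, u_t in enumerate(u):
        h = A @ h + B * u_t
        y[t] = C @ h
    return y

def ssm_kernel(A, B, C, length):
    """Impulse response K_k = C A^k B for k = 0, ..., length - 1."""
    K = np.empty(length)
    v = B.copy()
    for k in range(length):
        K[k] = C @ v
        v = A @ v
    return K

rng = np.random.default_rng(0)
n_state, T = 4, 32
A = np.diag(rng.uniform(0.1, 0.9, size=n_state))   # stable diagonal state matrix
B = rng.normal(size=n_state)
C = rng.normal(size=n_state)
u = rng.normal(size=T)                             # input sequence

y_rec = ssm_recurrent(A, B, C, u)
y_conv = np.convolve(ssm_kernel(A, B, C, T), u)[:T]   # causal convolution form
print("recurrent and convolutional outputs agree:", np.allclose(y_rec, y_conv))
```

Each step of the recurrence costs a fixed amount of work, so a sequence is processed in time linear in its length, and the same layer can also be evaluated as a causal convolution with the kernel K.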

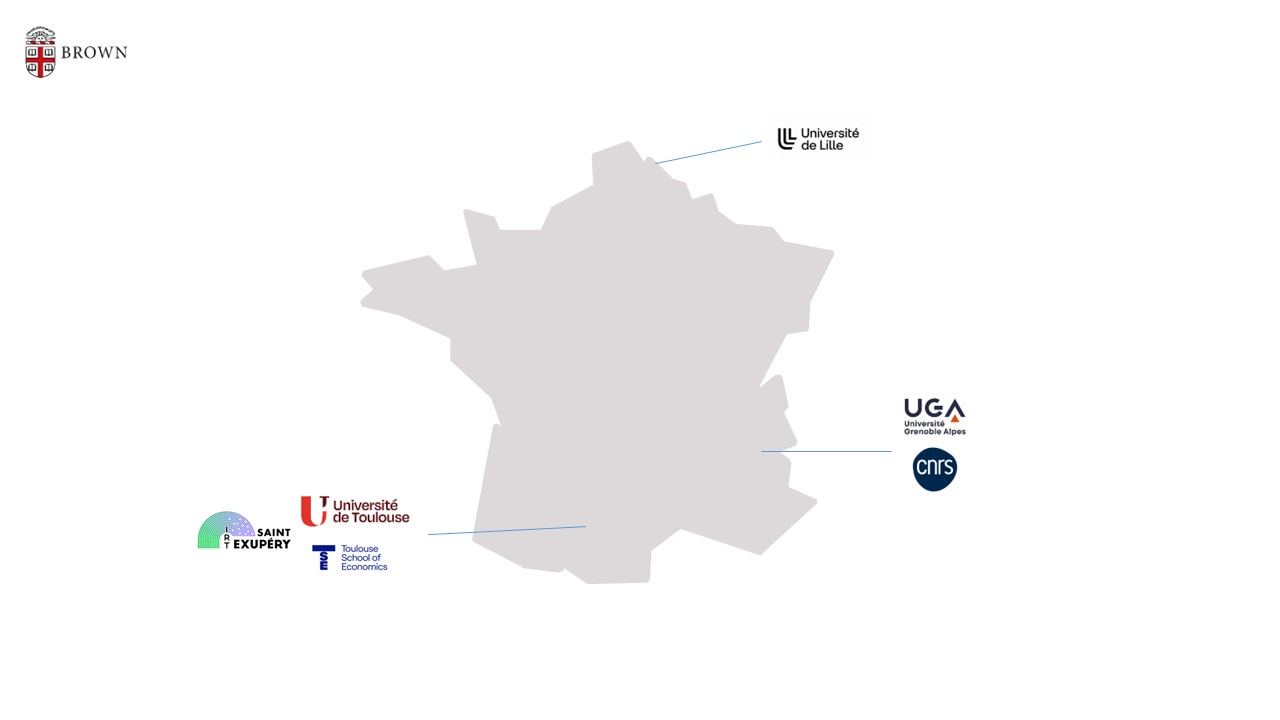

Consortium

Université de Toulouse (UT), Ecole d’Economie et de science sociale de Toulouse (TSE), Université Grenoble Alpes (UGA), CNRS, Université de Lille, IRT Saint-Exupéry, Brown University

- The results obtained will facilitate the selection and design of neural network architectures suited to targeted applications.

- They will facilitate the selection and design of algorithms and their parameters.

- They will contribute to a better understanding of the strengths and limitations of the straight-through estimator. This will allow it to be used more judiciously and will open the way to improvements.

- The project will introduce new State-Space Model (SSM) architectures, as well as methods for their construction.

The MadLearning project will improve understanding of the impact of architecture and algorithm choices on learning performance. In doing so, it will facilitate the construction of high-performance, efficient next-generation AI.

The project brings together a community of around ten researchers, faculty members, and permanent engineers, joined by four doctoral students and one postdoctoral researcher as the project progresses.
