MAGICALL

Mathematics of generative models: an interdisciplinary analysis of loss function landscapes

Preview

MAGICALL is a theoretical research project aimed at gaining an in-depth understanding of modern generative models and variational inference, combining mathematics, statistical physics, and optimization.

Giulio Biroli, professor, École normale supérieure (ENS-PSL) – Laboratoire de Physique de l’ENS (LPENS)

The MAGICALL project focuses on the theoretical foundations of modern generative models, such as diffusion models, and of variational inference. It analyzes loss function landscapes, learning dynamics, and generalization properties in high-dimensional settings. Using tools from statistical physics, statistics, and optimization, the project aims to provide theoretical frameworks for understanding the efficiency, stability, and reliability of these methods.

Keywords: Generative models, Loss landscapes, Diffusion

Missions

Our research

Understanding the mechanisms of generalization of diffusion models

The project will theoretically analyze the transition between memorization and generalization using controlled probabilistic models (Gaussian mixtures, hierarchical structures), studying the impact of dataset size, data dimension, and learning dynamics.

Analyzing learning dynamics and the geometry of loss landscapes

Tools from statistical physics and optimization will be used to characterize the loss function landscapes of generative models and relate their structure to the convergence, stability, and performance properties of training algorithms.

Characterizing the formation of data structures during learning

The project will investigate how latent data structures (modes, hierarchies, relevant subspaces) gradually emerge during the training of generative models, linking these phenomena to optimization time scales and data complexity.

Analyzing and developing methods to limit “mode collapse”

Strategies based on guided distribution paths, annealing, and over-parameterization will be studied theoretically and numerically in order to identify conditions that guarantee robust exploration of multimodal distributions.

Structuring and leading an interdisciplinary community focused on the mathematics of generative AI

The project will organize seminars, PEPR IA working groups, international collaborations, and a summer school to promote exchanges between mathematicians, physicists, and researchers in machine learning.

Consortium

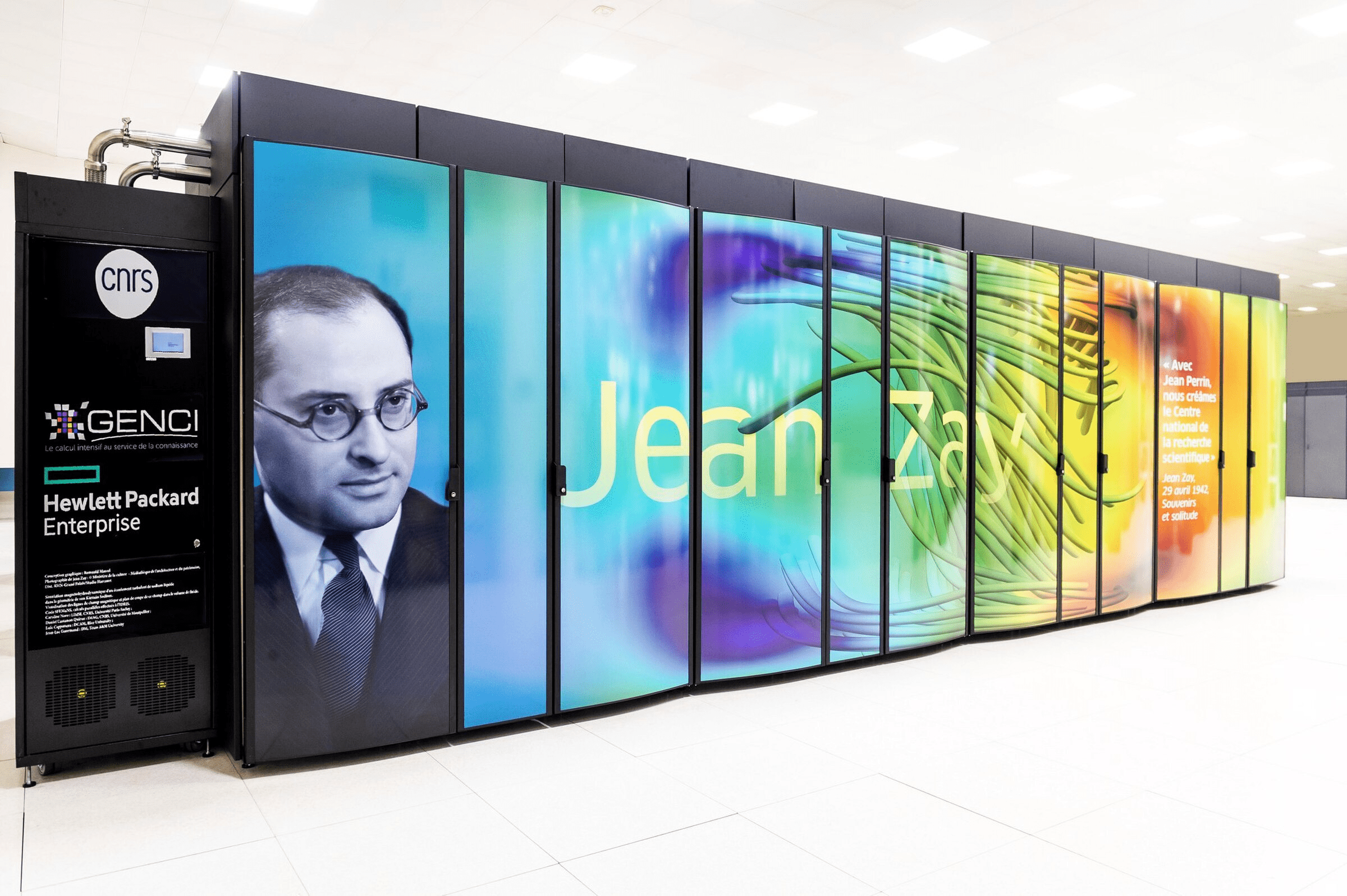

École normale supérieure (ENS-PSL), CNRS

- Theoretical advances in generative models such as diffusion and flow matching, particularly their generalization capabilities in high dimensions.

- Characterization of loss function landscapes and learning dynamics, linking geometry, optimization, and generation performance.

- Analysis of the formation of latent data structures during training, including the emergence of relevant modes, hierarchies, and subspaces.

- Development of theoretical frameworks to understand and limit the phenomenon of mode collapse, in particular through annealing strategies and guided dynamics.

Reducing the environmental footprint of AI, improving reliability and uncertainty quantification, contributing to privacy and trust issues.

A community of 3 researchers, faculty members, and permanent engineers, joined by 3 postdoctoral fellows as the project progresses.

Publication

Other projects