PRODIGE-AI

PRObability, ranDom matrIx theory, Geometry and gEneralization for generative-AI

Overview

Developing rigorous mathematical foundations for the generalization problem in generative AI

Amaury Habrard, Professor, Université Jean Monnet Saint-Etienne

The PRODIGE-AI project aims to develop new theoretical models for more reliable, efficient, and transparent generative AI, and is organized around three areas. The first develops new theoretical frameworks to better understand the generalization capabilities of generative AI models. The second develops strategies for building generative AI models that are both effective and explainable. The third designs geometric frameworks for generative AI, with a particular focus on graphs.

The project draws on advanced mathematical tools, including probability theory, random matrices, and geometry, and focuses on diffusion models, flow matching, transformers, and state-space models. The project also aims to study entanglement in learned representations, as well as collapse and hallucination phenomena, in order to better understand the biases and limitations of generative models.

Keywords: Generative AI, generalization, statistical learning, probability theory, random matrix theory, information theory, graph theory, geometry.

Missions

Our research

Better understanding the issue of generalization in generative AI

Derive generalization guarantees, particularly in the form of generalization bounds, using mathematical frameworks based on concentration theory, random matrices, or random tensors.

Identify key elements of “complexity” that characterize the generalization process and model creativity.

Derive self-certified algorithms, i.e., algorithms that directly minimize a generalization bound, by leveraging PAC-Bayes theory with the study of compression schemes.

Revisit diffusion and flow matching models from the perspective of the variational formulation of filtering theory, continuous filtering, and the properties of velocity fields.

Improving the explainability and effectiveness of generative AI models

Use information theory and sensitivity analysis to disentangle generative factors and explore their causal relationships.

Exploit quantum information theory and random tensors to characterize collapse phenomena (mode collapse) and develop parameter-efficient fine-tuning techniques.

Leverage random matrix theory and free probability theory to better understand and explain how generative AI models work.

Use the C*-algebra framework to define new expressive and data-efficient models.

Developing geometric approaches for generative AI

Leverage invariant theory to construct graph embeddings and improve equivariant neural networks for generative AI.

Study generation capabilities based on metric properties of latent spaces, in particular by designing new distances that better capture geometric properties relevant to the learning process.

Design new approaches to distribution estimation on graph spaces to develop new generative graph models based on diffusion, flow matching, or optimal transport frameworks.

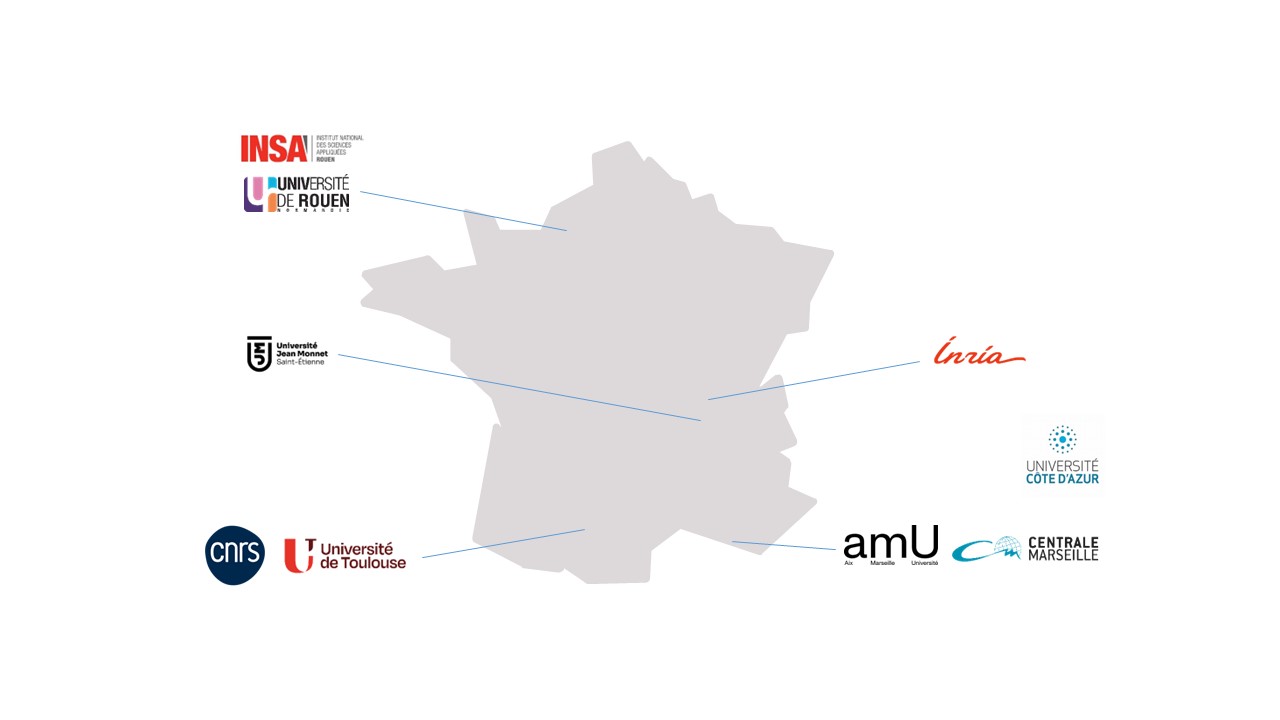

Consortium

Université Jean Monnet Saint-Etienne, CNRS, Inria, Université Côte d’Azur, Aix-Marseille Université, Université de Toulouse, Ecole Centrale de Marseille, INSA Rouen Normandie, Université de Rouen Normandie

- A better understanding of the generalization properties of generative AI models.

- A better characterization of specific phenomena such as creativity, entanglement, collapses, and hallucinations.

- The development of generative models that are both interpretable and effective, and the construction of meta-models to better understand and analyze their dynamics.

- The development of innovative geometric generative AI frameworks for graph generation and manifold learning.

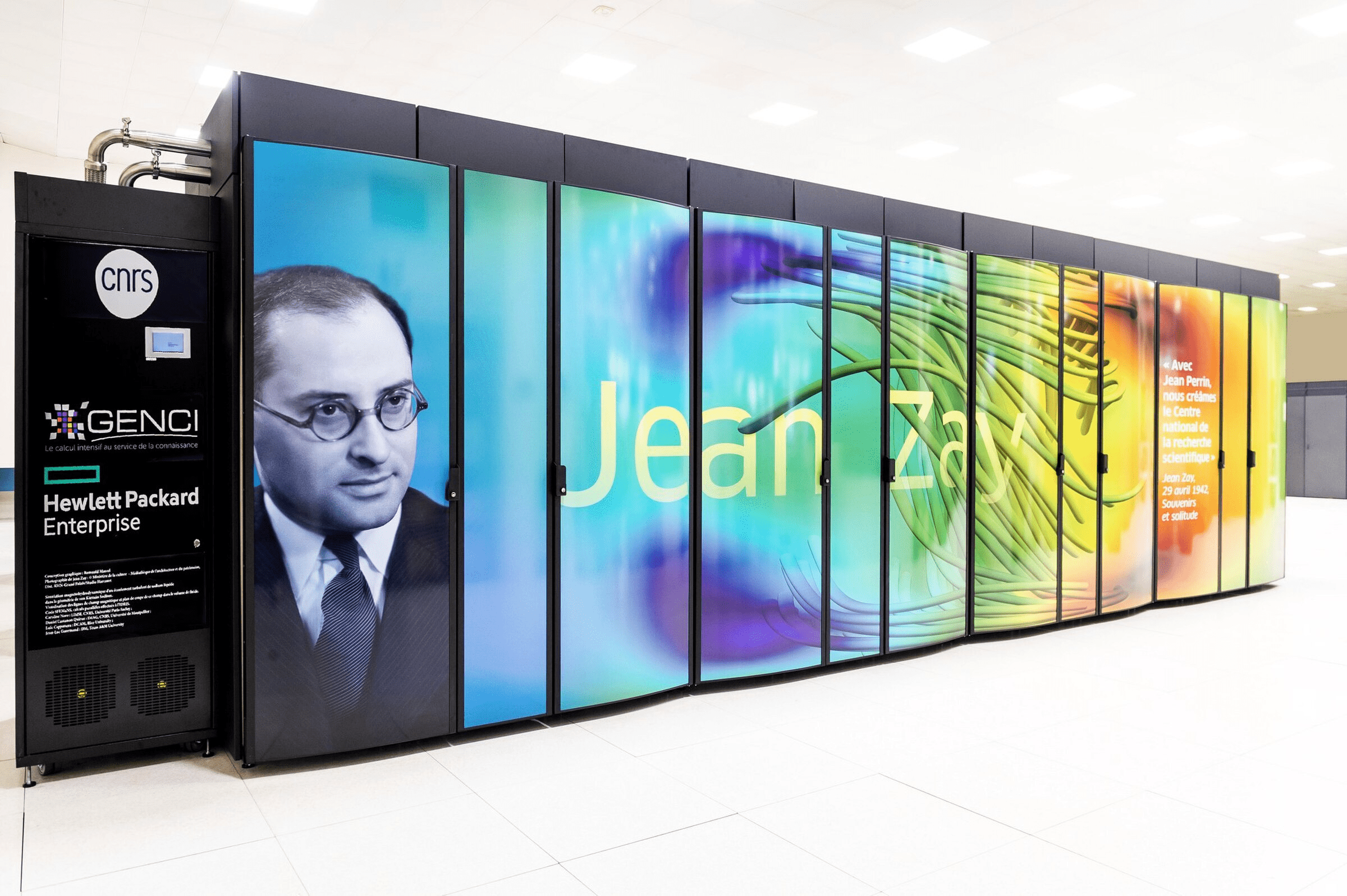

Through fundamental developments in generative AI, the project aims to provide more reliable, transparent, and efficient generation mechanisms for images, text, and graphs. It also seeks to promote new methodologies for document creation and analysis, as well as for applications in physics, chemistry, biology, health, and other fields. Finally, it aims to reduce dependence on large technology companies and to contribute to the development of open-source tools and software.

The project brings together a community of around thirty researchers, faculty members, and permanent engineers, and will also involve two doctoral students, three postdoctoral researchers, and five interns as the project progresses.

Publication
