TENSOR4ML

TENSOR methods FOR mastering modern Machine Learning

Preview

Unlocking the full potential of tensor methods for compression and efficient training of modern AI models.

Konstantin Usevich, research scientist, CNRS

The TENSOR4ML project aims to revisit the foundations of tensor methods for modern deep learning in the context of generative models and scientific AI. The project will develop new frugal AI models based on low-rank approximation of tensors in novel tensor formats, taking into account the particularities of applications, implementation, and hardware constraints. The project gathers experts in machine learning, approximation theory, matrix and tensor analysis, optimization, and high-performance computing.

Keywords: neural networks, tensor decompositions, low-rank approximation, geometry, frugality

Missions

Our research

Revisit the usage of low-rank models for compression and training

Provide new learning and inference algorithms based on well-understood tensor machinery, with low complexity and data requirements. We aim to understand the interplay between low-rank compression and the performance of neural networks in order to choose the best architectures and learning strategies.

Develop advanced tensor formats

Develop new tensor formats and neural network architectures, such as compositions of nonlinear maps in tensor formats. These will require new approaches to study their properties (such as identifiability, generalization, robustness, and approximation properties).

Develop efficient learning and optimization techniques

Exploit the geometry of tensor formats and functional spaces, and leverage access to derivative evaluations of pretrained representations.

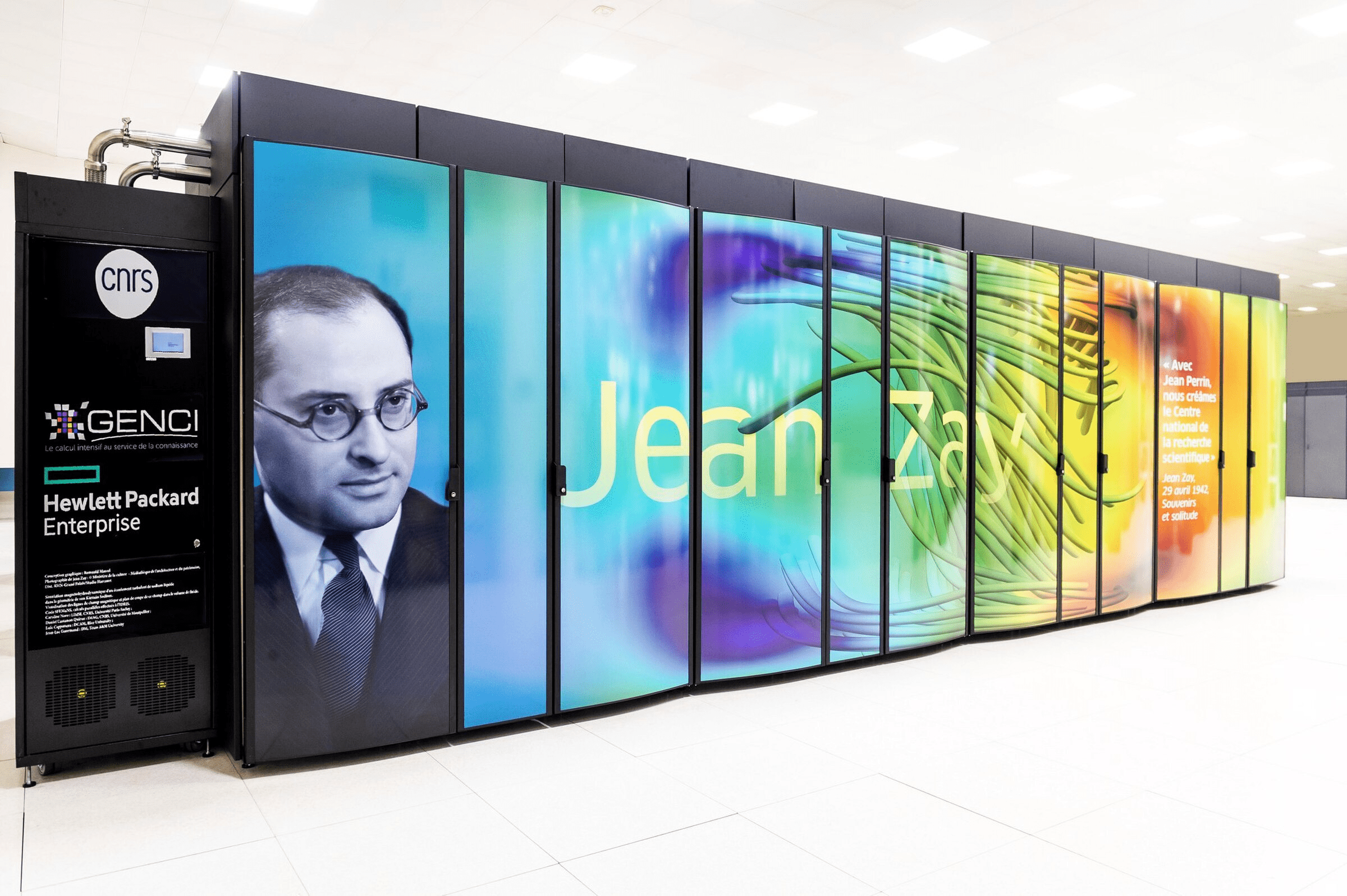

Speed up learning and inference

Employ high-performance computing to meet the needs of high-dimensional applications in scientific and generative AI.

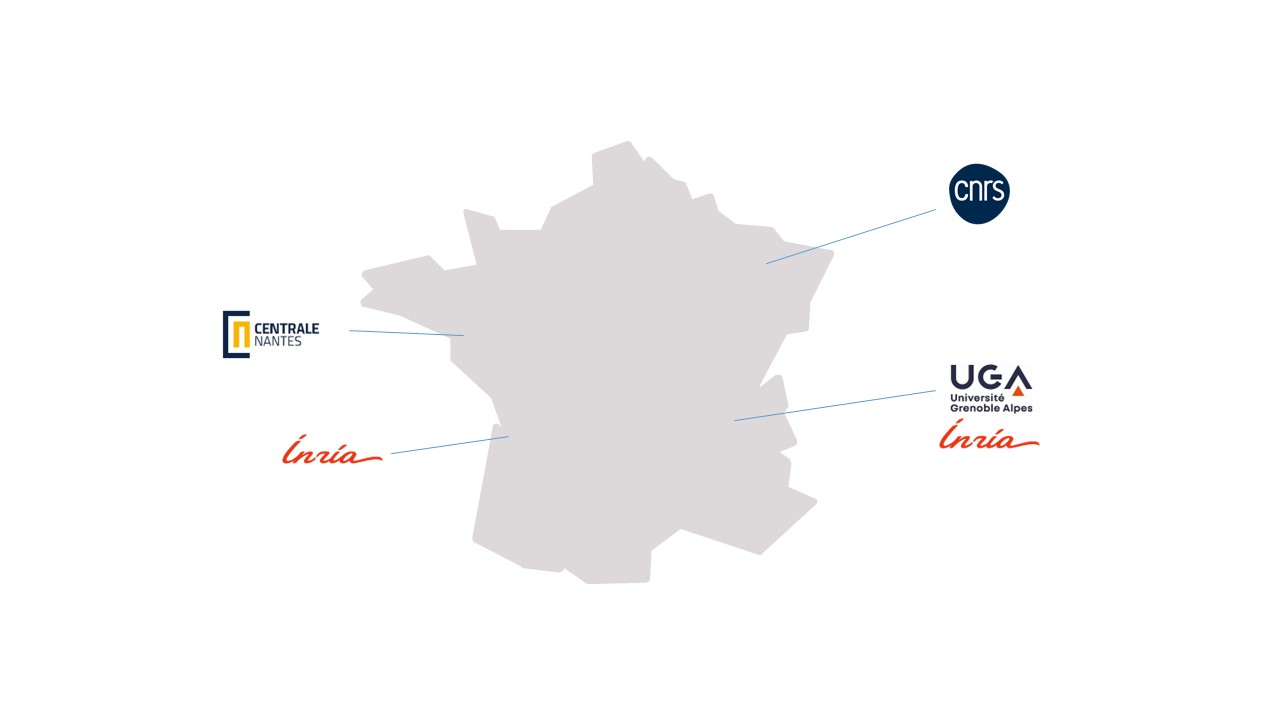

Consortium

CNRS (Nancy), INRIA (Bordeaux and Grenoble), Ecole Centrale Nantes

TENSOR4ML is an interdisciplinary project ranging from the mathematical foundations of frugal AI to algorithms and implementation. The expected outcomes of the project include:

- new error and robustness analysis for low-rank tensor approximations of deep neural networks

- new tensor formats and neural network architectures adapted to learning tasks

- novel efficient optimization and approximation methods for a range of architectures

- open-source high-performance tensor libraries for high-dimensional applications

TENSOR4ML tackles one of the key challenges of AI research – the cost of training and deployment of large AI models. The ultimate goal is to reduce the energy consumption and storage requirements of current AI methods and make them accessible to a larger community.

The project brings together a community of around fifteen researchers, research professors, and permanent engineers, also involving two doctoral students, four postdoctoral researchers, and nine permanent researchers and research professors as the project progresses.
